Why I've Stopped Using ChatGPT - And Why This Concerns All of Us

Some decisions feel sudden. This one has been building for years.

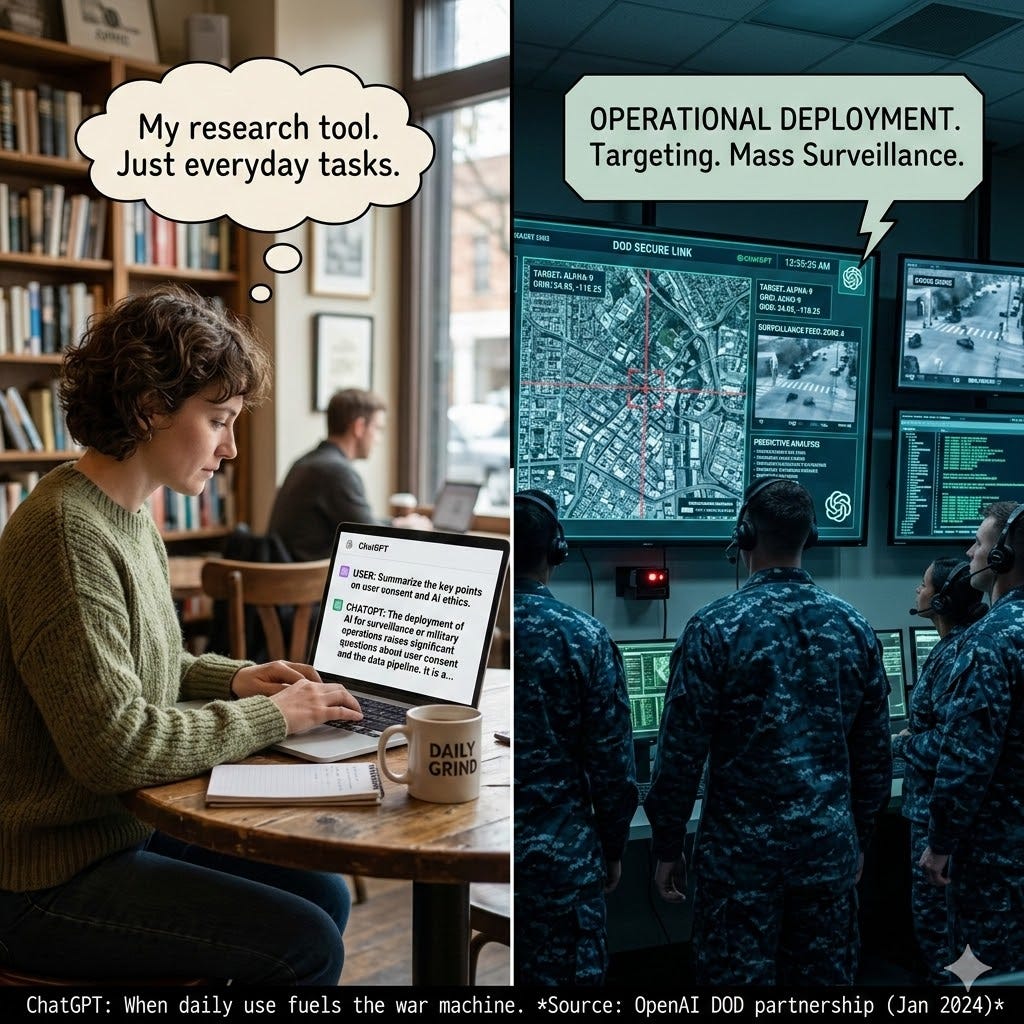

What if every prompt you typed into ChatGPT was helping refine the engine of the American war machine?

That is not a hypothetical. It is now the reality.

Over the weekend, OpenAI formally agreed to deploy ChatGPT to all three million US Department of Defense personnel. The model that holds 81% of the global AI chatbot market is now embedded in Pentagon classified networks via a platform called GenAI.mil.

Mass surveillance and military operations are now legitimate use cases for the technology four in five AI users rely on daily.

This did not happen by accident. OpenAI quietly removed its prohibitions on military use in early 2024. Google and xAI followed. The reward for compliance was swift: hundreds of millions in government contracts.

Here is what you need to understand about how this affects you.

Your data is not neutral

Let me be clear about how this works. Your prompts about business strategies, creative projects, or professional thinking do not directly fly drones. However, every interaction you have with ChatGPT helps refine its core intelligence. That core intelligence - honed by our collective labour, ideas, and data - is the foundation upon which its military applications are built.

Think of it this way. ChatGPT is a vehicle. Our prompts are the fuel. OpenAI is now using that same vehicle, refined by our fuel, to transport military cargo. The engine runs better because of us. For everyone, including the new driver.

Our feedback loops are now embedded in that machinery.

Whether we consented or not.

Does paying protect you? Not in the way that matters.

If you subscribe to ChatGPT Plus, Pro, or Enterprise, OpenAI will not use your specific prompts to train its public models. That is a real privacy protection - against the company itself.

However, it offers no shield against the state.

OpenAI complies with government warrants for user data. It actively monitors conversations for “threats” and reports users to law enforcement. CEO Sam Altman has explicitly warned that your chats lack legal confidentiality protections. The first known federal warrant seeking ChatGPT user data was issued in 2025. OpenAI complied, handing over conversation histories, account details, and payment data.

Paid or free, your data sits on servers accessible to the same national security apparatus now formally embedded in the Pentagon. The subscription fee buys you out of being training data. It does not buy you out of being surveilled.

The company that said no

The contrast could not be starker.

Anthropic, the maker of Claude, held two firm, non-negotiable lines: no fully autonomous weapons systems - weapons that kill without human decision-making - and no mass domestic surveillance of civilians. It was willing to work with the Pentagon but drew those ethical red lines.

Anthropic refused to remove those guardrails. The Trump administration responded by declaring the company a “supply chain risk” - a designation normally reserved for foreign adversaries - and ordered all federal agencies to cease using its technology.

The strikes happened anyway.

On February 28, 2026, the US military launched Operation Epic Fury - a joint offensive with Israel striking Iranian nuclear facilities and military infrastructure. According to the Wall Street Journal and Axios, the Department of Defense used Anthropic’s Claude AI models during this operation for intelligence assessment, target selection, and battlefield simulations.

This happened just hours after Anthropic was officially banned from government work. It happened while Anthropic was still refusing to drop its safeguards against autonomous weapons and mass surveillance. It happened despite Pentagon assurances that it had “no interest” in using AI for targeting.

The military was granted a six-month phase-out period because Claude is currently the only AI model authorised to interact with classified Pentagon systems.

The ethical lines Anthropic tried to draw were crossed before the ink dried on the ban.

A company that refused to enable autonomous killing and warrantless surveillance was treated as an enemy of the state. The companies that said yes were handed hundreds of millions. And the company that said no had its technology used for precisely the operations it was trying to prevent.

That is not a slippery slope. That is the established reality in the absence of mandatory regulation.

Why this concerns you

ChatGPT controls approximately 81% of the global AI chatbot market. Over 92% of Fortune 500 companies use OpenAI products. When a single platform dominates four in five AI interactions worldwide and that platform is now formally embedded in military and surveillance operations, this is not a niche tech story.

It is a democracy story.

It is a human rights story.

It is also a story about the privatisation of war, where our daily tools and our data become a subsidised resource for the military-industrial complex.

In Australia, 77% of the population wants AI regulation and 86% want a dedicated regulatory body. The Australian Government spent 15 months and $188,000 finding AI experts, then scrapped the body. Our federal government continues to treat urgency as optional while this unfolds in real time.

The limits of personal choice

I work with a range of AI platforms deliberately - from the United States, China, and France. Not because any single country offers perfect safety, but because diversification itself is a form of risk management.

Does this make me immune to the concerns I have raised? Absolutely not.

Any US-based company can be compelled by the US government. That is the law. If the Department of Defense demands data from Anthropic, Google, or any American AI firm, those companies must comply or face consequences we have now seen play out in real time.

Chinese AI platforms come with their own profound concerns - state surveillance, censorship, and a different but equally troubling authoritarian framework. I do not pretend otherwise.

French and European platforms operate under GDPR and a different legal tradition; however, they are not immune to pressure, especially as NATO allies.

So why diversify at all?

Because concentration risk is real. Putting all of my thinking into a single platform, as most people do - especially one now formally embedded in the US military-surveillance complex - is unacceptable to me. Diversification is not a shield. It is a hedge. It spreads exposure rather than consolidating it.

More importantly, my personal tool choices are not the solution. They are a signal.

The ultimate answer is not which chatbot I use. It is mandatory regulation - laws that protect these red lines for every company, in every country. Voluntary codes of conduct failed the moment OpenAI removed its military ban and Anthropic was punished for holding its guardrails.

My divestment from ChatGPT is a values-based decision. But values without laws are just preferences. We need legal frameworks that make it impossible for any company - American, Chinese, or European - to drift into autonomous weapons and mass surveillance by default.

That is the conversation we should be having.

What I am doing

I have stopped using ChatGPT entirely. Not as a gesture. As a values-based decision.

I am not abandoning AI. I remain a committed advocate for Human-AI Collaboration as a force for human good. But I will not pour my thinking into a machine now formally embedded in the machinery of state power.

Every user deserves to make that choice with full information. The question is whether you now have it and what you will do with it.

The answer is not just about which AI chatbot you use. It is about what kind of technological future we are willing to build. And whose hands we are willing to put it in.

I have made my choice.

What will you do with yours?

You know what to do.

Onward we press

Here’s my radio interview with Mike Jeffreys from 2GB overnights

Disclaimer: The views expressed in this article are my own and do not necessarily reflect the positions of any organisations I am affiliated with. I do not hold financial positions in any companies mentioned. My decision to stop using ChatGPT is a personal, values-based choice, and I am not receiving compensation from any alternative AI platforms. Information cited from news reports is accurate to the best of my knowledge as of 2nd March 2026.

References

Sue Barrett Recent Articles on AI

Australia’s AI Policy Vacuum: When Government Retreats, Citizens Must Fill the Gap

How AI Rental Screening is Worsening Australia’s Housing Crisis

Reference Sources for this Article

OpenAI’s Removal of Military Use Prohibitions

The Intercept (via China Economic Net): https://www.msn.cn/zh-cn/news/other/openai%E5%8E%BB%E5%B9%B4%E5%B7%B2%E5%BC%80%E6%94%BEai%E5%86%9B%E7%94%A8%E9%99%90%E5%88%B6/ar-AA1z6b1q

Hong Kong Wen Wei Po: https://www.wenweipo.com/a/202602/15/AP67b146bbe4b033b0e62c2cae.html

OpenAI’s Pentagon Agreement and GenAI.mil Deployment

Inside Defense: https://insidedefense.com/daily-news/openai-joins-xai-google-housing-its-ai-capability-pentagons-genaimil-platform

Hong Kong Wen Wei Po: https://www.wenweipo.com/a/202602/15/AP67b146bbe4b033b0e62c2cae.html

CNN Business: https://edition.cnn.com/2026/02/27/tech/ai-anthropic-claude-pentagon-donald-trump/index.html

The Pentagon’s Ultimatum to Anthropic

The New York Times: https://www.nytimes.com/2026/02/24/technology/anthropic-claude-pentagon-hegseth-ai.html

CNN Business: https://edition.cnn.com/2026/02/27/tech/ai-anthropic-claude-pentagon-donald-trump/index.html

The New York Times (February 27): https://www.nytimes.com/2026/02/27/technology/trump-anthropic-ai-pentagon.html

Trump Administration’s Ban on Anthropic

CNN Business: https://edition.cnn.com/2026/02/27/tech/ai-anthropic-claude-pentagon-donald-trump/index.html

The New York Times: https://www.nytimes.com/2026/02/27/technology/trump-anthropic-ai-pentagon.html

Claude’s Use in Operation Epic Fury (Iran Strikes)

Reuters (via Lokmat Times): https://www.lokmattimes.com/business/reuters-says-pentagon-used-anthropic-ai-for-strikes-against-iran-though-firm-was-banned/

Reuters (via ThePrint): https://theprint.in/feature/reuters-says-pentagon-used-anthropic-ai-for-strikes-against-iran-though-firm-was-banned/2582957/

Government Access to ChatGPT User Data

Stanford Cyber Policy Center: https://cyber.fsi.stanford.edu/news/first-federal-warrant-chatgpt-records-marks-new-frontier-digital-surveillance

Forbes (via Business & Human Rights Resource Centre): https://www.business-humanrights.org/en/latest-news/forbes-first-federal-warrant-for-chatgpt-records-shows-how-ai-platforms-could-be-used-for-digital-surveillance/
